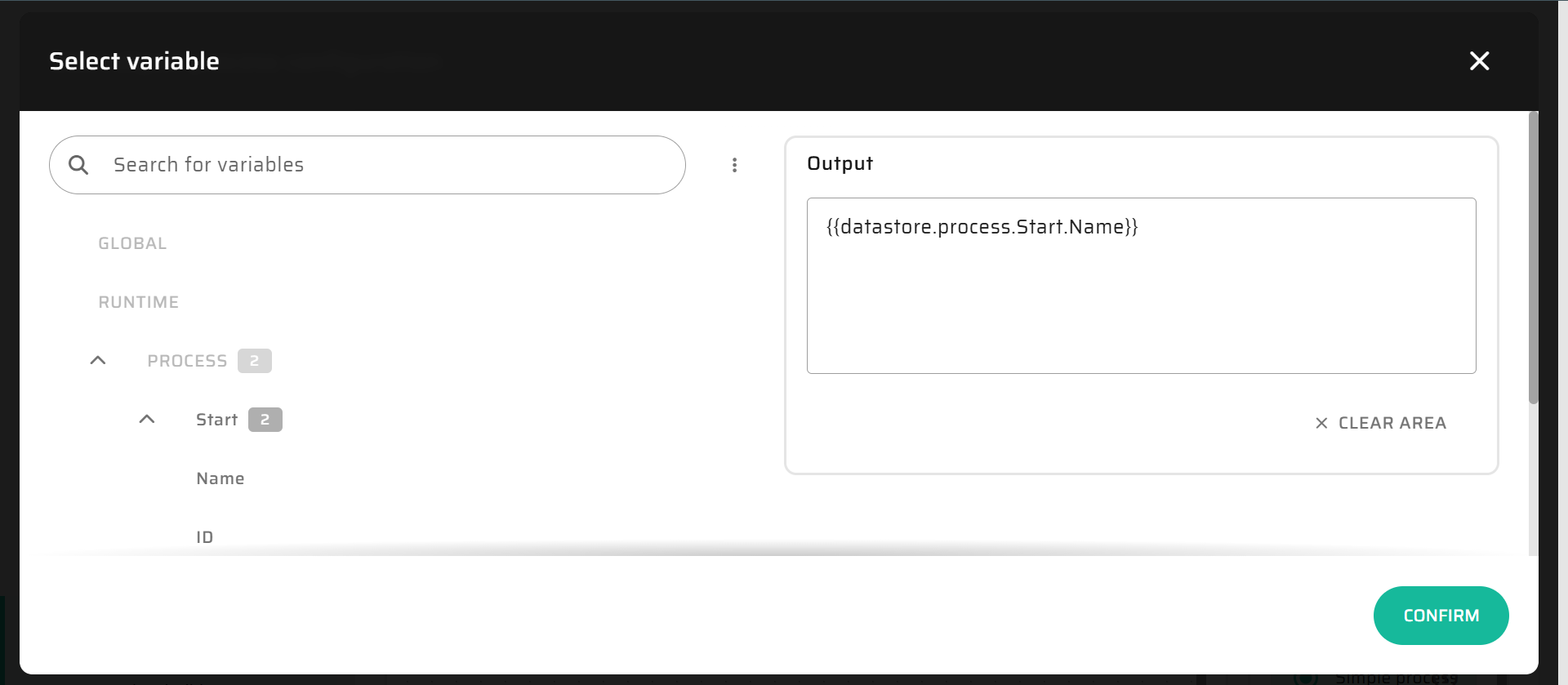

DataStore

While Process Variables define what data exist in a Flow, the DataStore defines where they live and how they behave during execution.

Every variable - whether input, intermediate, or output - ultimately resides in the DataStore. It is the in-memory workspace where variables, objects, and collections are stored, manipulated, and passed between nodes.

If variables are the nervous system of a Flow, the DataStore is its central brain: it maintains current state, remembers what has changed, and decides what should be saved when the process ends.

Concept and Purpose

The DataStore serves as the temporary in-memory layer that keeps process data consistent, isolated, and transactional.

It ensures that:

All process operations occur in memory before touching the database.

No partial writes or corrupt states can occur if the Flow fails.

Developers and analysts can safely debug and inspect process logic without risk to live data.

Essentially, it is a staging area for the database: changes are rehearsed in memory and become real only after the process completes successfully.

Lifecycle and Interaction

Initialization:

When a Flow starts, the system creates a fresh DataStore and loads all input variables into it. These can come from Widgets, Layouts, APIs, or Artoo commands.

Execution:

As nodes execute, they continuously read and write to the DataStore:

Load Object nodes pull records from the database into memory.

Assignment nodes modify or enrich variable values.

Create/Update/Delete nodes mark objects for later persistence - they don’t yet touch the database.

The DataStore therefore always contains the current truth of the process: every variable, object, and flag representing what the Flow knows so far.

Completion:

At the End Node, all data in the DataStore marked for persistence are written to the database in one controlled step.

This guarantees that if the Flow fails at any point before completion, the database remains untouched and consistent.

After a successful commit, the DataStore is cleared, freeing memory and closing the process context.
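The three lifecycle phases can be sketched in a few lines of Python. This is an illustrative model only, not the P4 implementation: the `DataStore` class, its `mark` method, the `run_flow` function, the `simulate_failure` input, and the plain dictionary standing in for the database are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DataStore:
    """Hypothetical in-memory workspace for one Flow run (not the P4 API)."""

    variables: dict = field(default_factory=dict)
    # Pending changes as (operation, key, record) tuples, in the order marked.
    pending: list = field(default_factory=list)

    def mark(self, operation: str, key: str, record: Optional[dict] = None) -> None:
        """Note an intended change without touching the database."""
        self.pending.append((operation, key, record))


def run_flow(inputs: dict, database: dict) -> None:
    """Run a toy three-node Flow against a dict that stands in for the database."""
    # Initialization: a fresh DataStore receives the input variables.
    store = DataStore(variables=dict(inputs))
    order_id = store.variables["order_id"]

    # Execution, Load Object: copy the record into memory.
    order = dict(database[order_id])
    # Execution, Assignment: change the in-memory copy only.
    order["status"] = "approved"
    store.variables["order"] = order
    # Execution, Update: mark the object for later persistence.
    store.mark("updated", order_id, order)

    # A failure at this point leaves the database untouched.
    if store.variables.get("simulate_failure"):
        raise RuntimeError("Flow failed before the End Node")

    # Completion: the End Node applies every marked change in one step.
    for operation, key, record in store.pending:
        if operation == "deleted":
            database.pop(key, None)
        else:  # "created" or "updated"
            database[key] = record

    # The DataStore is cleared once the changes are applied.
    store.variables.clear()
    store.pending.clear()
```

Calling `run_flow({"order_id": "A1"}, db)` on `db = {"A1": {"status": "new"}}` changes the stored status to `"approved"`. Adding `"simulate_failure": True` to the inputs raises an error and leaves `db` exactly as it was, because nothing is written before the final step.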

In-Memory vs Persistent Storage

The P4 architecture strictly separates execution from persistence:

| Phase | Location | Description |
|---|---|---|
| Flow Execution | In-memory (DataStore) | Fast, isolated environment where variables and data objects evolve. |
| Data Persistence | Database | Final, permanent storage applied only after successful Flow completion. |

This separation enables safe experimentation during development, transactional integrity during runtime, and high transparency during debugging.

Data Saving and Transactions

When the Flow reaches its End Node, the platform evaluates the DataStore contents and executes persistence in several stages:

Evaluation: Identify all records tagged as “created,” “updated,” or “deleted.”

Transaction Start: If enabled, the system opens a single transaction scope.

Write Operations: Each marked object is persisted to the database.

Commit or Rollback:

If all operations succeed, the transaction commits and changes become permanent.

If any step fails, all operations roll back, leaving the database unchanged.

When the transaction scope is enabled, this approach ensures that even multi-entity processes behave atomically - all or nothing.
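The commit-or-rollback stage can be illustrated with Python's built-in `sqlite3` module. This is a minimal sketch under stated assumptions, not the platform's persistence code: the `persist` function, the `customer` table, and the `(operation, id, name)` tuple format for marked records are hypothetical.

```python
import sqlite3


def persist(conn: sqlite3.Connection, pending: list) -> bool:
    """Apply all marked changes in one transaction; return True on commit.

    `pending` is a list of (operation, record_id, name) tuples
    (a hypothetical format chosen for this example).
    """
    try:
        # Used as a context manager, the connection commits if the block
        # succeeds and rolls back if any statement raises.
        with conn:
            for operation, record_id, name in pending:
                if operation == "created":
                    conn.execute(
                        "INSERT INTO customer (id, name) VALUES (?, ?)",
                        (record_id, name),
                    )
                elif operation == "updated":
                    conn.execute(
                        "UPDATE customer SET name = ? WHERE id = ?",
                        (name, record_id),
                    )
                elif operation == "deleted":
                    conn.execute("DELETE FROM customer WHERE id = ?", (record_id,))
                else:
                    raise ValueError(f"Unknown operation: {operation}")
        return True
    except (sqlite3.Error, ValueError):
        # Every write in this batch has been rolled back.
        return False
```

If a batch contains one valid update followed by an insert that violates the primary key, `persist` returns `False` and the update is undone as well, which is the all-or-nothing behavior described above.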

Debugging and Inspection

The DataStore is also the main tool for understanding process behavior.

When running a Flow manually from the builder, users can:

Examine input and output variables at any step.

Inspect DataStore snapshots after each node.

Review execution logs to trace how variables evolved.

This transparency allows testing logic thoroughly before linking it to UI actions or deploying it in production.
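The idea of a snapshot after each node can be modeled as follows. This is a sketch for illustration only: the `run_with_snapshots` function and the representation of nodes as plain Python functions are assumptions, and the builder's actual snapshot format is not described here.

```python
import copy
from typing import Any, Callable

# A node is modeled as a function that mutates the store in place (assumption).
Node = Callable[[dict], None]


def run_with_snapshots(inputs: dict, nodes: list) -> list:
    """Run (name, node) pairs in order and record the store after each one."""
    store: dict[str, Any] = copy.deepcopy(inputs)
    # Deep copies keep later mutations from altering earlier snapshots.
    snapshots = [("start", copy.deepcopy(store))]
    for name, node in nodes:
        node(store)
        snapshots.append((name, copy.deepcopy(store)))
    return snapshots


def set_tax(store: dict) -> None:
    store["tax"] = 20


def set_total(store: dict) -> None:
    store["total"] = store["net"] + store["tax"]


for step, state in run_with_snapshots(
    {"net": 100}, [("Set tax", set_tax), ("Set total", set_total)]
):
    print(step, state)
```

Comparing consecutive snapshots shows exactly which node introduced or changed a variable, which is the kind of trace the execution logs provide.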

Best Practices

Treat the DataStore as your single source of truth during execution. Avoid mixing in external temporary states.

Keep Flows stateless across runs - each execution should start with a clean DataStore.

Use the DataStore for data transformation and decision-making, not for long-term caching.

Write to the database only at the End Node, unless the Flow explicitly uses asynchronous or background processes.

Regularly inspect DataStore contents when debugging or optimizing performance.

Summary

The DataStore is the operational core of every Flow - a secure, transient workspace where Process Variables live and logic unfolds.

By decoupling in-memory execution from database persistence, it enables safe iteration, transactional accuracy, and transparent debugging.

Together, Process Variables and the DataStore form the living layer of the P4 Process Builder - one defines the data, the other ensures that it moves between nodes and is persisted correctly.